Zipf’s law is an interesting numerical phenomenon that can be simply expressed by saying that the size of a category of things tends to be inversely proportional to its rank.

Some math will follow, but don’t worry too much about the detail; I am more concerned with the interpretation.

Say there are four categories of apples (Golden Delicious, Granny Smith, Honeycrisp, Fuji). Let’s rank these along some criterion, say, redness. The reddest one will have rank 1 and the least red rank 4. Zipf’s law tells us that the ratings are most likely not 4, 3, 2, 1, but rather 12, 6, 4, 3, that is, 1/1, 1/2, 1/3 and 1/4 of the largest category.
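To make the arithmetic concrete, here is a minimal sketch that divides the largest category by each rank. The function name `zipf_sizes` and the choice of 12 as the largest rating are just illustrative assumptions taken from the apple example.

```python
from fractions import Fraction

def zipf_sizes(largest, n_categories):
    """Ideal Zipfian size for each rank 1..n_categories: largest / rank."""
    return [Fraction(largest, rank) for rank in range(1, n_categories + 1)]

# The four apple categories, with the reddest one rated 12 (an assumed value).
sizes = zipf_sizes(12, 4)
print([str(s) for s in sizes])  # ['12', '6', '4', '3']
```

Using `Fraction` keeps the values exact, so a largest category that does not divide evenly would show up as, say, `10/3` rather than a rounded decimal.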

When we plot size against rank, we get the curve of 1/x for positive values of x. Recall that rank can only be positive.

What’s particularly neat about this relationship is that if we make both axes logarithmic, we get a straight line with a slope of -1.
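The slope of -1 can be checked numerically. The sketch below fits a least-squares line through the points (log rank, log size) for an idealized 1/rank series; the helper `loglog_slope` and the choice of 100 ranks are assumptions made for illustration, and real data would only follow the line approximately.

```python
import math

def loglog_slope(xs, ys):
    """Least-squares slope of log(y) plotted against log(x)."""
    log_x = [math.log(x) for x in xs]
    log_y = [math.log(y) for y in ys]
    mean_x = sum(log_x) / len(log_x)
    mean_y = sum(log_y) / len(log_y)
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(log_x, log_y))
    variance = sum((a - mean_x) ** 2 for a in log_x)
    return covariance / variance

ranks = list(range(1, 101))
sizes = [1 / r for r in ranks]      # ideal Zipfian sizes
slope = loglog_slope(ranks, sizes)
print(round(slope, 6))              # -1.0
```

This works because log(1/x) = -log(x), so on logarithmic axes the curve 1/x becomes the line y = -x.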

Now, this series 1/1, 1/2, 1/3… has a special name: the harmonic series. Obviously, this piques my interest as a musician. Why should phenomena as diverse as the distribution of letters in a language, the sizes of earthquakes and the populations of cities follow such a relationship?

What makes this relationship such a fascinating one to study is that these kinds of logarithmic relationships are extremely common in almost any phenomenon that one could care to count, but also that the interpretation of what they should mean is very murky indeed. George Kingsley Zipf attributed it to the principle of least effort, an idea reminiscent of the principle of least action, which has a prominent role in physics. I tend to think there is a more fundamental cognitive aspect to it, which is (ironically) what makes it so hard to reflect on.

There are some things that we can observe, though. The first is that these relationships are related to the act of counting itself (the terms of the harmonic series just being the reciprocals of the counting numbers, after all). This gives rise to the phenomenon known as Benford’s law, a law that basically says that the first digits of unconnected numbers tend to follow a similar logarithmic relationship, with small leading digits turning up far more often than large ones.

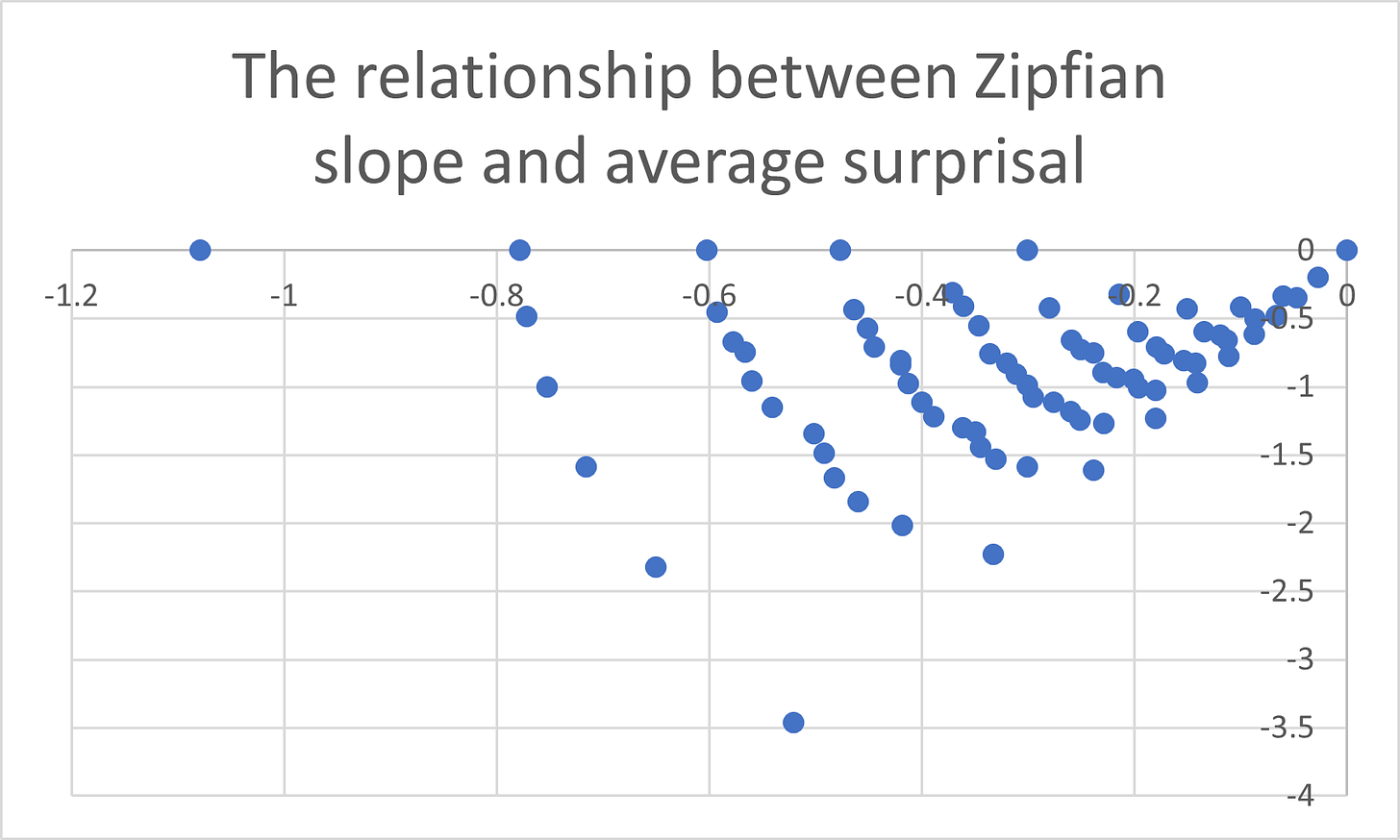
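For reference, Benford’s law is usually stated as: the probability of a leading digit d is log10(1 + 1/d). The sketch below simply tabulates that formula; the function name `benford_probability` is an illustrative choice, not something from a library.

```python
import math

def benford_probability(digit):
    """Probability that a number's first digit is `digit` under Benford's law."""
    return math.log10(1 + 1 / digit)

probabilities = {d: benford_probability(d) for d in range(1, 10)}
for digit, p in probabilities.items():
    print(f"{digit}: {p:.3f}")
```

A leading 1 comes out at roughly 30% and a leading 9 at under 5%, and the nine probabilities add up to 1.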

The second interesting fact is that Zipf’s law is related to the surprisal I mentioned in a previous post. Entropy, another important physical and information-theoretic concept, turns out to be just another way to speak about the combined surprisal of a set of outcomes. So instead of asking how surprised we should be by 5 coin flips landing heads in a row, we want to ask how surprising it would be, taken together, for 5 heads to come up, the Queen of England to wave to her corgis and the coffee pot to boil over. One way to combine them is the one I flippantly suggested as a quick and dirty illustrative concept in that earlier post: just taking the average surprisal. Entropy, meanwhile, is what one gets by adding together, over all the events, each event’s probability times its surprisal (the surprisal being -1 times the logarithm of the probability).
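Here is a minimal sketch of the two ways of combining surprisals described above. It assumes base-2 logarithms (so the units are bits), which may differ from the earlier post, and the biased coin with probabilities 0.9 and 0.1 is a made-up example.

```python
import math

def surprisal(p):
    """Surprisal of an event with probability p, in bits (base 2 is an assumption)."""
    return -math.log2(p)

def average_surprisal(probs):
    """The quick and dirty combination: a plain, unweighted mean of the surprisals."""
    return sum(surprisal(p) for p in probs) / len(probs)

def entropy(probs):
    """Entropy: each event's probability times its surprisal, summed over all events."""
    return sum(p * surprisal(p) for p in probs if p > 0)

five_heads = surprisal(0.5 ** 5)       # five fair coin flips all landing heads
fair_coin = entropy([0.5, 0.5])
biased_coin = [0.9, 0.1]               # hypothetical lopsided coin

print(five_heads)                      # 5.0
print(fair_coin)                       # 1.0
print(average_surprisal(biased_coin), entropy(biased_coin))
```

For the fair coin the two measures agree, but for the lopsided coin the entropy is much smaller than the plain average, because the very surprising outcome is also the rare one and so gets little weight.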

Zipfian counting (plotting the frequencies of things against their rank on a logarithmic scale), it turns out, is just another way to join together a set of surprisals, and the way it is related to those other ways is what is so interesting. I will just put the charts here as a sort of teaser [sorry] and leave the explanation for next time, but suffice it to say that Zipfian counting has properties that make it useful for science in ways that more traditional statistical approaches could never be.