We have so far seen that frequentist null-hypothesis significance testing is useful in a controlled environment, but becomes more unruly the further we move from that assumption of control, which makes it a fertile target for unscrupulous or careless actors.

Bayesian statistics is useful in cases where we need to base decisions on assumptions that we can’t necessarily rigorously justify. This is more common than often admitted. It shines when it is rigorously applied to the task of extrapolating from a complex web of statistical information, the kind in which human beings (even of the statistical kind) are exceptionally prone to get tangled up. But this approach can’t be used to justify conclusions if the assumptions themselves are in question.

Propensity statistics, meanwhile, may be great when we are given the object of study to manipulate, but more often than not statistical treatments are applied to data that has been abstracted from its object, usually precisely because of the abstraction. So propensity statistics may often amount to wishful thinking and frustration.

I have been hinting at an explanation for why I believe Zipfian slopes are a useful measure in statistical situations where the playing field cannot be guaranteed to be fair. This is partly because, like entropy, a Zipfian slope is simply a measure of the information content in a message, and it treats every message the same as every other. In the end, we cannot get more information out of a message than the message contains, so this approach should offer a better starting point than other techniques.

But what does entropy measure?

Basically, it measures the equality (or roughness, as I like to think of it) of a distribution. Let’s go back to flipping coins as an example and say we toss four coins. Let’s look at the distribution of classes of events now, so the unordered outcomes could be:

TTTT or HHHH, in which case the entropy = 0 bits

What this means is that every element is the same, so that one element has a 100% chance of appearing in any position.

This is basically a way of saying that if we knew the message had this distribution of either T’s or H’s, and had to pick an element at random from any spot in the message, there would be no surprise at all in what the answer was.

If we were propensity theorists we could object that the coin could have landed all heads instead of all tails, but we are playing information statistics, so we only have access to the message.

TTHH (and all the permutations thereof), where entropy = 1 bit

Playing by the information theory rules again, this is the maximum amount of uncertainty for a string of this many characters with this many choices.

If we were told that we have four characters with two choices each and an entropy of 1 bit, then we would know that there would be no advantage to expecting a head over a tail in the outcome.

THHH or HTTT (and all the permutations thereof), with entropy = 0.811 bits

In this case the entropy value is lower than the maximum for this string, which means that we would be less surprised if we pull the predominant result and more surprised if we pull the less common one.

Entropy, in other words, tells us whether a series of outcomes is spiky, smooth, or some degree of roughness in between.
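The three four-coin cases above can be checked with a few lines of Python. This is only a minimal sketch: the function name `shannon_entropy` is my own, and it assumes that the probability of each outcome is simply its observed share of the message.

```python
from collections import Counter
from math import log2

def shannon_entropy(message):
    """Shannon entropy in bits, treating observed shares as probabilities."""
    counts = Counter(message)
    total = len(message)
    # Sum p * log2(1/p) over each distinct symbol in the message.
    return sum((n / total) * log2(total / n) for n in counts.values())

for message in ["TTTT", "TTHH", "THHH"]:
    print(message, round(shannon_entropy(message), 3))
# TTTT 0.0
# TTHH 1.0
# THHH 0.811
```

Because only the counts matter, any permutation of a string (HTHT, say, or HHTH) gives the same value as its sorted form.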

The problem with entropy is its dependence on the probability of events in a string. Recall: Probability is just the number of observations of a particular type divided by the total number of events of all types.

That means that if we want to evaluate the entropy of a series of events, we have to make assumptions about the whole series, and that we can’t directly compare series with different sets of assumptions. Again, this is great if we have a closed, controllable experimental space, but not so great for the vast majority of things we would like to investigate in science that cannot be so controlled.

Zipfian slopes fix this by being context neutral in a very particular way. Recall that the difference between the HHHH and HHTT cases above was that we knew that there were two possible outcomes and four flips. But let’s say that, unbeknownst to us, there were in fact five flips in each experiment, with the remaining flip landing on its edge. The experimenter discarded these as “invalid”, but for us it changes everything. Now the results are HHHHE(dge), HHTTE, and HHHTE. The entropies have changed from 0 bits to 0.722 bits, from 1 bit to 1.522 bits, and from 0.811 bits to 1.371 bits, respectively. Even though the relative entropies stay in the same order here, the absolute bit values are all different. That makes it difficult to compare two sets of events with a given entropy value directly.
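To see how much one hidden edge landing moves the numbers, here is a small sketch that recomputes the entropy of each string with and without the extra flip. The helper name `shannon_entropy` is my own, and the five-character strings assume exactly one edge landing per experiment.

```python
from collections import Counter
from math import log2

def shannon_entropy(message):
    """Shannon entropy in bits, treating observed shares as probabilities."""
    counts = Counter(message)
    total = len(message)
    return sum((n / total) * log2(total / n) for n in counts.values())

# Each pair is (what we were shown, what actually happened); E marks an edge.
pairs = [("HHHH", "HHHHE"), ("HHTT", "HHTTE"), ("HHHT", "HHHTE")]
for shown, actual in pairs:
    print(shown, round(shannon_entropy(shown), 3),
          "->", actual, round(shannon_entropy(actual), 3))
# HHHH 0.0 -> HHHHE 0.722
# HHTT 1.0 -> HHTTE 1.522
# HHHT 0.811 -> HHHTE 1.371
```

The ordering of the three cases survives, but none of the absolute values do, which is the comparison problem in miniature.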

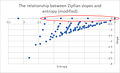

When we use Zipfian slopes, the maximum-entropy cases all have the same value, namely zero, regardless of the number of event types. The chart below shifts and flips entropy values to match the Zipfian slope values more closely, to aid in comparison without affecting the overall relationship. This is essentially entropy calculated without regard to the probability of events, and with a base selected to approximate the Zipfian slope values.

For clarity, here are the actual entropy values in bits.

Specifically, what this shows is all the possible ways that we can split up twelve events into classes, called the integer partitions of 12. The zero values for slope are the partitions that divide 12 evenly: two classes of size 6 each, three classes of size 4 each, four classes of size 3 each, six classes of size 2 each, and twelve classes of size 1 each. The point at the origin is where there is one class of size 12. This is a special case, perhaps the most revealing and interesting of all, but it will need to be discussed later.

So, the important thing here is that while the entropy is different for each of these cases, they all have the same Zipfian slope: 0.
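A rough way to check this for the even splits of 12 is sketched below. It rests on an assumption of mine: that a Zipfian slope can be estimated as the least-squares slope of log(class size) against log(rank), with classes ranked from largest to smallest. The names `entropy_bits` and `zipf_slope` are hypothetical helpers for illustration, not an established API.

```python
from math import log, log2

def entropy_bits(sizes):
    """Shannon entropy in bits for a list of class sizes."""
    total = sum(sizes)
    return sum((n / total) * log2(total / n) for n in sizes)

def zipf_slope(sizes):
    """Least-squares slope of log(size) against log(rank) (assumed definition)."""
    ranked = sorted(sizes, reverse=True)
    if len(ranked) < 2:
        return None  # one class gives a single point, so no line can be fitted
    xs = [log(rank) for rank in range(1, len(ranked) + 1)]
    ys = [log(n) for n in ranked]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

# The partitions of 12 into k equal classes, for k = 2, 3, 4, 6, 12.
for k in (2, 3, 4, 6, 12):
    sizes = [12 // k] * k
    print(k, "classes:", round(entropy_bits(sizes), 3), "bits, slope", zipf_slope(sizes))

# An uneven partition of 12 for contrast: the fitted slope is negative.
print(zipf_slope([6, 3, 2, 1]))
```

Under this assumed definition the entropies climb from 1 bit (two classes) to about 3.585 bits (twelve classes), while every fitted slope is zero up to floating-point rounding. The single class of size 12 is left undefined here, since one point cannot determine a line.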

In essence, then, the distinction is that Zipfian slopes allow us to speak about the internal distribution of a set of events in a way that lets us compare it to the internal distribution of other sets of events of different sizes.

The reason why I believe this is important relates back to a passage from Book I, Chapter ii, §5 of A System of Logic by John Stuart Mill:

> If like the robber in the Arabian Nights we make a mark with chalk on a house to enable us to know it again, the mark has a purpose, but it has not properly any meaning. The chalk does not declare anything about the house; it does not mean, This is such a person’s house, or This is a house which contains a booty. The object of making the mark is merely distinction. I say to myself, All these houses are so nearly alike that if I lose sight of them I shall not again be able to distinguish that which I am now looking at, from any of the others; I must therefore contrive to make the appearance of this house unlike that of others, that I may hereafter know when I see the mark – not indeed any attribute of the house – but simply that it is the same house that I am looking at. Morgiana chalked all the other houses in a similar manner, and defeated the scheme: how? simply by obliterating the difference of appearance between that house and the others. The chalk was still there, but it no longer served the purpose of a distinctive mark.

What I am claiming is that nature speaks to us only in terms of the difference of marks, and that comparing things in terms of Zipfian slopes allows us to compare the sets of marks nature provides us with as marks.

In other words, what I am proposing is that Zipfian slopes allow us to do science without any more knowledge of the observed or the observer than is given to us by the data itself.

In the following series of posts I would like to continue exploring this idea of marking in terms of the remainder of the above charts (which is at least as interesting). Following that I would like to show what we can do with an approach like this, and what we can’t.